Note: This is part 1 of a multi-part series.

Part 2 is here.

In this post, we will create an AI that learns to avoid obstacles and move along an open path. We will build the AI agent from a neural network model and train it with a genetic algorithm.

A brief overview of the system

A neural network is a mathematical model made up of connected neurons. You can think of it as a complex equation with many variables. The values of these variables are random at first, so whatever we feed into the equation, it returns an essentially random output. Our job is to tweak those variables so that, for a given input, we get the desired output. An untrained neural network resembles a mind with no instructions in it: it is of little use on its own and must be trained before it works properly. We will use a genetic algorithm to train it.

The genetic algorithm is based on Charles Darwin's theory of natural selection. Creatures that are fit to survive in a given environment live to produce offspring, while those that are unfit die out. Each new generation therefore has a better chance of survival than the last.

So we start with many creatures, each with its own mind (a neural network model). We pick the best brains and create offspring from them, so that the new creatures are better than the previous generation (the genetic algorithm). We keep repeating this until we get intelligent creatures.
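As a rough sketch of the "create offspring" step, the code below treats each creature's brain as a flat array of floats. The post leaves the actual training code to Part 2, so everything here is an assumption chosen for brevity: the class name `EvolutionSketch`, the use of uniform crossover, and the random-reset mutation are all hypothetical and may differ from what Part 2 does.

```csharp
using System;

// Hypothetical sketch, for illustration only; the real training code is covered in Part 2.
public static class EvolutionSketch
{
    // Build a child by taking each gene from one of the two parents at random
    // (uniform crossover, an assumed choice).
    public static float[] Crossover(float[] parentA, float[] parentB, Random rng)
    {
        if (parentA.Length != parentB.Length)
            throw new ArgumentException("parents must have the same number of genes");

        float[] child = new float[parentA.Length];
        for (int i = 0; i < child.Length; i++)
        {
            child[i] = rng.NextDouble() < 0.5 ? parentA[i] : parentB[i];
        }
        return child;
    }

    // With probability mutationRate, replace a gene with a new random value
    // between -1 and 1 (random-reset mutation, an assumed choice).
    public static void Mutate(float[] genes, double mutationRate, Random rng)
    {
        for (int i = 0; i < genes.Length; i++)
        {
            if (rng.NextDouble() < mutationRate)
            {
                genes[i] = (float)(rng.NextDouble() * 2.0 - 1.0);
            }
        }
    }
}
```

Repeating "pick the fittest, cross them over, mutate the children" over many generations is the loop that the overview above describes.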

In this part, we will create a neural network model for path-finding AI. In the subsequent part, this model will be trained and implemented in Unity.

Neural Network

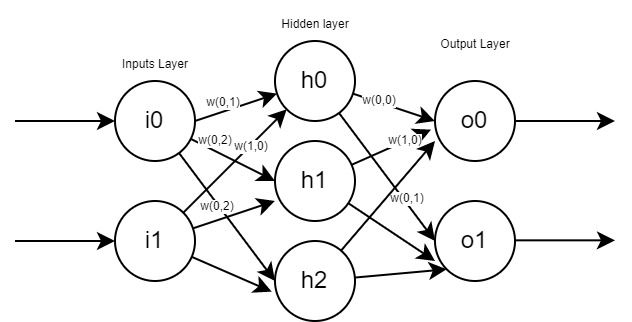

An artificial neural network is a model loosely based on the natural network of neurons in the human brain. It consists of multiple connected nodes. A neuron transmits its signal to the neurons it is connected to, changing their behaviour. The strength of each connection is known as its weight (w). Each node also has a value of its own, called the bias (b), which is added in when the node's final value is computed.

The typical model consists of nodes arranged into layers. It always has an input layer and an output layer. Between them, there may be one or more layers known as hidden layers. Each node in a layer is connected to every node in the previous layer.

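The value of the jth node in the ith layer, $v_{ij}$, is calculated as

$$v_{ij} = f\left(\sum_{k} w_{kj}\, v_{(i-1)k} + b_{ij}\right)$$

Here,

- $v_{(i-1)k}$ is the kth node of the previous layer, i.e. the (i-1)th layer
- $w_{xy}$ is the weight of the connection between node x and node y, so $w_{kj}$ connects node k of the previous layer to node j of this layer
- $b_{ij}$ is the bias of that node
- $f(x)$ is an activation function. We will be using the sigmoid here, whose equation is $y = 1/(1 + e^{-x})$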

We calculate values for all nodes until we get to the output layer.
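To make the calculation concrete, here is a minimal sketch that computes the value of a single node. The class `NodeValueExample` and its methods are hypothetical names used only for illustration; they are not part of the model built below.

```csharp
using System;

// Hypothetical helper, for illustration only.
public static class NodeValueExample
{
    // Sigmoid activation: f(x) = 1 / (1 + e^(-x))
    public static float Sigmoid(float x)
    {
        return (float)(1.0 / (1.0 + Math.Exp(-x)));
    }

    // Value of one node: the weighted sum of the previous layer's values,
    // plus the node's bias, passed through the sigmoid.
    // weights[k] is the weight of the connection from node k of the
    // previous layer to this node.
    public static float NodeValue(float[] previousLayer, float[] weights, float bias)
    {
        if (previousLayer.Length != weights.Length)
            throw new ArgumentException("previousLayer and weights must have the same length");

        float sum = bias;
        for (int k = 0; k < previousLayer.Length; k++)
        {
            sum += weights[k] * previousLayer[k];
        }
        return Sigmoid(sum);
    }
}
```

For example, with previous-layer values {1, 0}, weights {0.5, -0.5} and a bias of -0.5, the weighted sum is 0, so the node's value is sigmoid(0) = 0.5.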

Creating model

This model will have one hidden layer. The input, hidden, and output layers can each have a configurable number of nodes. Let's create a NeuralNetwork class to handle it. It is a plain C# class, so remove the MonoBehaviour base class that Unity adds to new scripts.

    using UnityEngine; // provides Random.Range, used when randomizing values

    public class NeuralNetwork {

    }

First, we need to declare the data the model requires: the node count of each layer, the weights of the connections between the layers, and the biases of the nodes.

    private int nodeCount_ip, nodeCount_hl, nodeCount_op;
    private float[][] weight_ip_hl;
    private float[][] weight_hl_op;
    private float[] bias_hl;
    private float[] bias_op;

Variable declarations:

- `nodeCount_ip`, `nodeCount_hl`, `nodeCount_op`: the number of nodes in the input, hidden, and output layers.
- `weight_ip_hl`: the weights of the connections from the input layer to the hidden layer. `weight_ip_hl[i][j]` is the weight of the connection from node `i` of the input layer to node `j` of the hidden layer.
- `weight_hl_op`: the weights of the connections from the hidden layer to the output layer. `weight_hl_op[i][j]` is the weight of the connection from node `i` of the hidden layer to node `j` of the output layer.
- `bias_hl`: the biases of the nodes of the hidden layer. `bias_hl[i]` is the bias of node `i` of the hidden layer.
- `bias_op`: the biases of the nodes of the output layer. `bias_op[i]` is the bias of node `i` of the output layer.

Now let's create a constructor for it. The data is initialized here according to the node counts provided for the input, hidden, and output layers. The values are also randomized so that each model behaves differently. Note that the constructor takes the output node count before the hidden layer node count.

    public NeuralNetwork(int inputNode, int outputNode, int hiddenLayerNode) {
        nodeCount_ip = inputNode;
        nodeCount_hl = hiddenLayerNode;
        nodeCount_op = outputNode;

        // initialize biases
        bias_hl = new float[nodeCount_hl];
        bias_op = new float[nodeCount_op];

        // initialize weights of the connections input -> hidden layer
        weight_ip_hl = new float[nodeCount_ip][];
        for (int i = 0; i < nodeCount_ip; i++) {
            weight_ip_hl[i] = new float[nodeCount_hl];
        }

        // initialize weights of the connections hidden layer -> output
        weight_hl_op = new float[nodeCount_hl][];
        for (int i = 0; i < nodeCount_hl; i++) {
            weight_hl_op[i] = new float[nodeCount_op];
        }

        RandomizeValues();
    }

    // randomize weights and bias values from -1 to 1
    public void RandomizeValues() {
        // randomize biases of the hidden layer
        for (int i = 0; i < bias_hl.Length; i++) {
            bias_hl[i] = Random.Range(-1f, 1f);
        }

        // randomize biases of the output layer
        for (int i = 0; i < bias_op.Length; i++) {
            bias_op[i] = Random.Range(-1f, 1f);
        }

        // randomize weights of ip -> hl
        for (int i = 0; i < weight_ip_hl.Length; i++) {
            for (int j = 0; j < weight_ip_hl[i].Length; j++) {
                weight_ip_hl[i][j] = Random.Range(-1f, 1f);
            }
        }

        // randomize weights of hl -> op
        for (int i = 0; i < weight_hl_op.Length; i++) {
            for (int j = 0; j < weight_hl_op[i].Length; j++) {
                weight_hl_op[i][j] = Random.Range(-1f, 1f);
            }
        }
    }

Now, create a method that calculates the output when an input is fed to this model. We first calculate the values of the hidden layer nodes from the input nodes. After that, the values of the output nodes are calculated from the hidden layer nodes. In both steps, the sigmoid function is used as the activation function.

    public float[] ProcessInput(float[] input) {
        // reject input that does not match the input layer size
        if (input.Length != nodeCount_ip) return null;

        float[] hiddenLayerValues = new float[nodeCount_hl]; // values of the hidden layer
        float[] outputValues = new float[nodeCount_op];      // values of the output layer

        // calculate the value of each node in the hidden layer
        for (int i = 0; i < nodeCount_hl; i++) {
            float summation = 0;
            // add weight * nodeValue for every connected input node
            for (int j = 0; j < nodeCount_ip; j++) {
                summation += weight_ip_hl[j][i] * input[j];
            }
            // add the bias of this node
            summation += bias_hl[i];
            // apply the activation function
            hiddenLayerValues[i] = ActivationFunction(summation);
        }

        // calculate the value of each node in the output layer
        for (int i = 0; i < nodeCount_op; i++) {
            float summation = 0;
            // add weight * nodeValue for every connected hidden node
            for (int j = 0; j < nodeCount_hl; j++) {
                summation += weight_hl_op[j][i] * hiddenLayerValues[j];
            }
            // add the bias of this node
            summation += bias_op[i];
            // apply the activation function
            outputValues[i] = ActivationFunction(summation);
        }

        return outputValues;
    }

    // use sigmoid as the activation function
    // (Mathf is Unity's float math class from UnityEngine, so System.Math is not needed)
    private float ActivationFunction(float value) {
        return 1f / (1f + Mathf.Exp(-value));
    }

We will be processing this model with a genetic algorithm later. The genetic algorithm requires the values to be in a 1D array, so we need to convert all the weights and biases into a 1D array. A setter is also needed so that our model can take values from a 1D array and set its weights and biases accordingly.

    public void SetValues(float[] geneSequence) {
        // ignore sequences that do not match the size of this model
        int expectedLength = nodeCount_ip * nodeCount_hl + nodeCount_hl + nodeCount_hl * nodeCount_op + nodeCount_op;
        if (geneSequence.Length != expectedLength) return;

        int currentIndex = 0; // index of the next gene to read

        // first set the weights of ip -> hl, starting from the connections of input node 0
        for (int i = 0; i < weight_ip_hl.Length; i++) {
            for (int j = 0; j < weight_ip_hl[i].Length; j++) {
                weight_ip_hl[i][j] = geneSequence[currentIndex];
                currentIndex++;
            }
        }

        // set the biases of the hidden layer
        for (int i = 0; i < bias_hl.Length; i++) {
            bias_hl[i] = geneSequence[currentIndex];
            currentIndex++;
        }

        // set the weights of hl -> op, starting from the connections of hidden node 0
        for (int i = 0; i < weight_hl_op.Length; i++) {
            for (int j = 0; j < weight_hl_op[i].Length; j++) {
                weight_hl_op[i][j] = geneSequence[currentIndex];
                currentIndex++;
            }
        }

        // set the biases of the output layer
        for (int i = 0; i < bias_op.Length; i++) {
            bias_op[i] = geneSequence[currentIndex];
            currentIndex++;
        }
    }

    public float[] GetGeneSequence() {
        float[] geneSequence =
            new float[nodeCount_ip * nodeCount_hl + nodeCount_hl + nodeCount_hl * nodeCount_op + nodeCount_op];
        int currentIndex = 0; // index of the next gene to write

        // get weights of ip -> hl
        for (int i = 0; i < weight_ip_hl.Length; i++) {
            for (int j = 0; j < weight_ip_hl[i].Length; j++) {
                geneSequence[currentIndex] = weight_ip_hl[i][j];
                currentIndex++;
            }
        }

        // get biases of hl
        for (int i = 0; i < bias_hl.Length; i++) {
            geneSequence[currentIndex] = bias_hl[i];
            currentIndex++;
        }

        // get weights of hl -> op
        for (int i = 0; i < weight_hl_op.Length; i++) {
            for (int j = 0; j < weight_hl_op[i].Length; j++) {
                geneSequence[currentIndex] = weight_hl_op[i][j];
                currentIndex++;
            }
        }

        // get biases of op
        for (int i = 0; i < bias_op.Length; i++) {
            geneSequence[currentIndex] = bias_op[i];
            currentIndex++;
        }

        return geneSequence;
    }

Here, the 1D array sequence (gene) of the model is laid out in the following order:

- weights of input to the hidden layer

- the bias of hidden layer

- weights of hidden layer to the output layer

- the bias of output layer

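In array form, the gene sequence has the following layout:

    [ weight_ip_hl[0][0], weight_ip_hl[0][1], ..., bias_hl[0], bias_hl[1], ..., weight_hl_op[0][0], weight_hl_op[0][1], ..., bias_op[0], bias_op[1], ... ]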
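As a small illustration of this ordering, the sketch below computes the length of the gene sequence and the index at which each of the four segments starts. `GeneLayout` and its methods are hypothetical names introduced only for this example; they mirror the loop order of `GetGeneSequence` but are not part of the `NeuralNetwork` class.

```csharp
using System;

// Hypothetical helper, for illustration only; not part of the NeuralNetwork class.
public static class GeneLayout
{
    // Total number of genes: all weights plus all biases.
    public static int Length(int ip, int hl, int op)
    {
        return ip * hl + hl + hl * op + op;
    }

    // Start index of each segment, in the order GetGeneSequence writes them:
    // ip->hl weights, hidden biases, hl->op weights, output biases.
    public static int[] Offsets(int ip, int hl, int op)
    {
        int weightsIpHl = 0;
        int biasHl = weightsIpHl + ip * hl;
        int weightsHlOp = biasHl + hl;
        int biasOp = weightsHlOp + hl * op;
        return new int[] { weightsIpHl, biasHl, weightsHlOp, biasOp };
    }

    // Index of weight_ip_hl[i][j] in the gene sequence.
    // The outer loop runs over input nodes, so genes are grouped by input node i.
    public static int IndexOfInputWeight(int i, int j, int hl)
    {
        return i * hl + j;
    }
}
```

For a network with 2 input nodes, 3 hidden nodes, and 1 output node, `Length(2, 3, 1)` is 13 and `Offsets(2, 3, 1)` is {0, 6, 9, 12}: six input-to-hidden weights, three hidden biases, three hidden-to-output weights, and one output bias.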

We have created all the required methods for this model.